AI Schools Are Selling a Dangerous Fantasy

Their most insidious “innovation” has probably already infiltrated your school. Here’s how to fight back.

It was a quiet Sunday afternoon when I found myself casually scrolling education job boards, wondering what else a washed-up old teacher might do with his life.

I expected the usual: curriculum, coaching, maybe even a cushy crossing-guard coordinator gig.

Instead, one company, clearly on a hiring spree, dominated the board: Alpha Schools.

Oddly, none of the jobs were for teachers. Every listing invited me to join a “learning rebellion” that “doesn’t play by the old rules.”

Hell yeah, I thought. A rebellion with comprehensive benefits.

Naturally, it wasn’t quite that simple.

Selling Utopia

“They’re learning twice as fast.”

That’s the promise of MacKenzie Price, founder of the Alpha Schools—born in Austin and now expanding anywhere with enough tech-minded millionaires to fill the $40,000/year seats.

“2 hour learning” is Alpha’s trademark. Two hours to, as Price says, “crush our academics.” Four recesses. A lineup of sleek workshops in “21st-century skills” like coding, public speaking, and entrepreneurship. And no teachers—just “guides,” paid handsomely enough to attract impressive resumés.

Parents are eating it up. Price is a social-media star with more than a million Instagram followers, a podcast, and an endless queue of glowing interviews.

The pitch is irresistible—Hogwarts vibes with a venture-capital twist, and more Starship Enterprise than schoolhouse.

And, they insist, the kids really are learning. Twice as fast!

It’s a utopia on paper—but behind the promise lurks a somewhat stickier question: learning twice as fast… at what?

Fueled by Discontent

Parents have real reasons to be frustrated with the status quo.

Too many schools are inefficient and incoherent. Even wealthy suburban districts—often founded on the promise of “superior” schooling—aren’t delivering.

Enrollment is falling, families are defecting, and trust is eroding. Grade inflation, pandemic absenteeism, and the long tail of failed movements like balanced literacy have pushed achievement into steep decline.

Even wealthy communities “are barely keeping pace with the typical student in the average developed country.”

- Education Next

Meanwhile, well-intentioned but performative district policies—like San Francisco’s decade-long ban on middle school algebra in the name of “equity”—make education feel “zero-sum” and signal to many parents that schools aren’t really in it for their kids.

The result is a broad disillusionment with public education. In that context, Alpha’s promise doesn’t sound like a gimmick; it sounds like a cure.

Bending under Scrutiny

As Alpha’s footprint has grown, so has the spotlight. WIRED Magazine published a detailed exposé last month that raises plenty of issues, but two questions linger for me: what students actually do during “academics,” and what they actually learn from it.

Neil Selwyn, education professor at Monash University and author of Should Robots Replace Teachers?, notes:

“Alpha’s trust in software-enabled repetition is typical of ventures started by people who were self-taught in coding or engineering—but they didn’t learn history, poetry, or the humanities that way.”

It’s no shock that tech founders built a school in their own image, favoring math and engineering over the messy, language-driven work of reading and writing.

But without that foundation, no student will be college- or career-ready, no matter how many “Managing an Airbnb” workshops they complete in sixth grade.

One parent’s story in WIRED makes the stakes painfully clear: her eight-year-old left Alpha able to read words quickly but not understand them, and writing at a kindergarten level.

These aren’t anomalies; they’re the predictable outcomes of outsourcing reading, writing, and thinking to an algorithm.

To see why, it’s worth looking at what those “2 hour” academics actually involve.

A “Drill and Kill” for the 21st Century

We’ve already discussed what makes up high-quality reading instruction: reading whole books, building background knowledge and schema, fluency practice, structured academic talk, and, of course, annotating texts.

But if your goal is to “crush academics in two hours,” there’s little space for any of that.

What fills the gap is not mysterious. Algorithmic learning programs with “adaptive pathways” (like i-Ready MyPath, Amplify mCLASS, Achieve3000, and DreamBox) form the backbone of what Alpha brands as “personalized AI learning.”

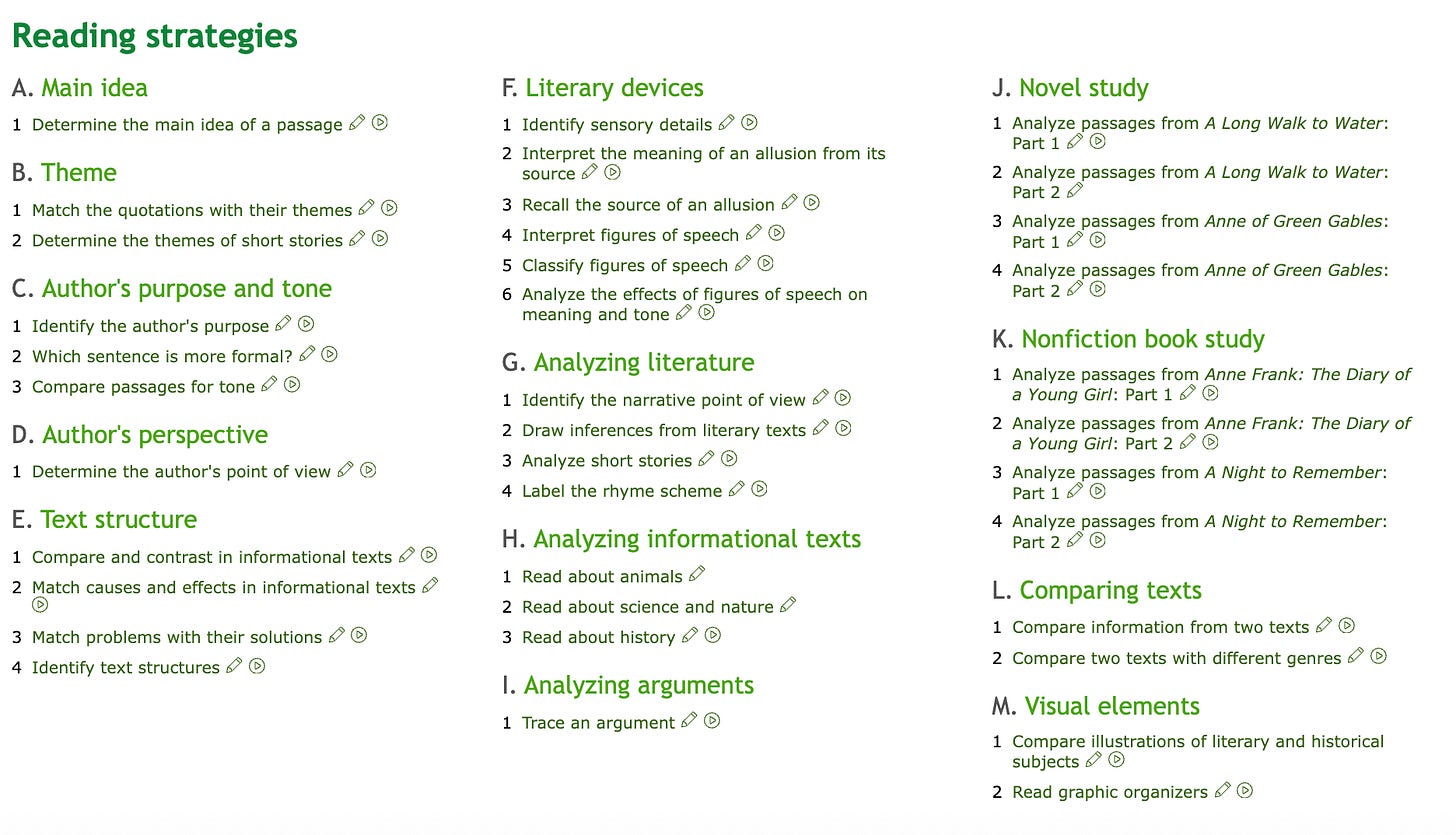

And the beating heart of Alpha’s “instruction,” as WIRED reported, has been IXL Learning.[1]

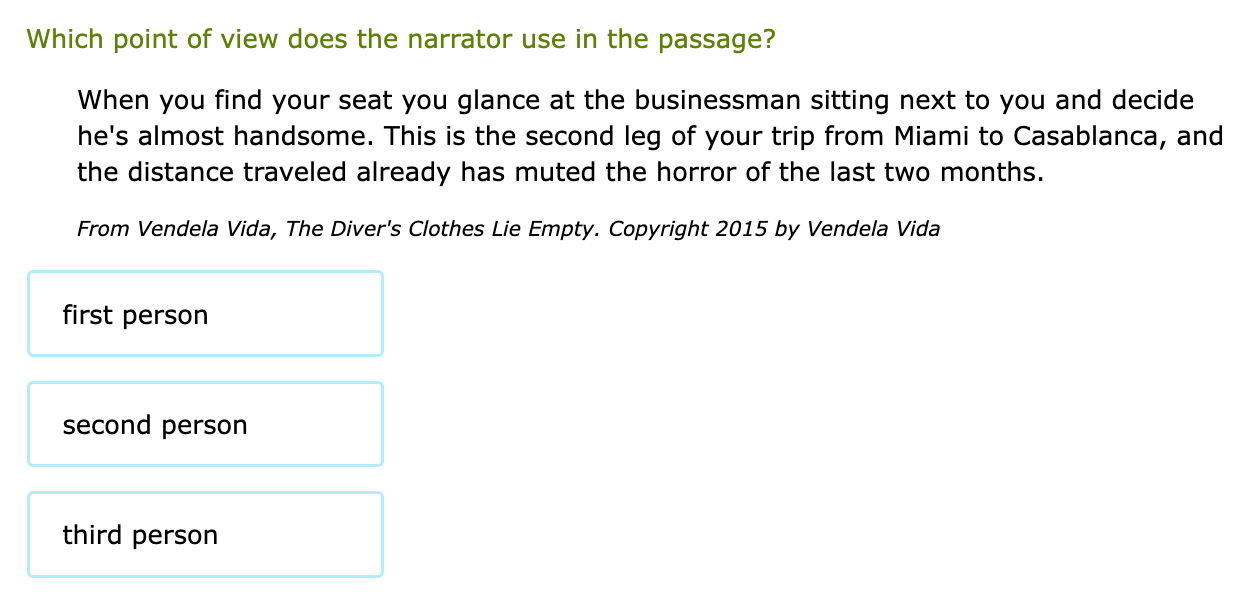

If you’re a seventh grader, here’s what that looks like: you log in, you see a grid of skills, and you click through a sequence of micro-tasks.

Under “Analyzing Literature” you might answer a question about a short, disposable snippet of text, sometimes solvable by reading only a single word.[2]

The program is “adaptive,” in theory: its diagnostic figures out which skills a student hasn’t yet “mastered” and then assigns more practice on those exact skills.

But this is where the model collapses. The cognitive work that builds real thinking (constructing an argument, writing a coherent essay, conducting a science lab, engaging in academic discourse) cannot be automated, nor can it be isolated into bite-sized skills.

So how are students “growing twice as fast” if this is the core academic experience? The answer lies, in part, in how the learning is being measured.

The MAP Mirage

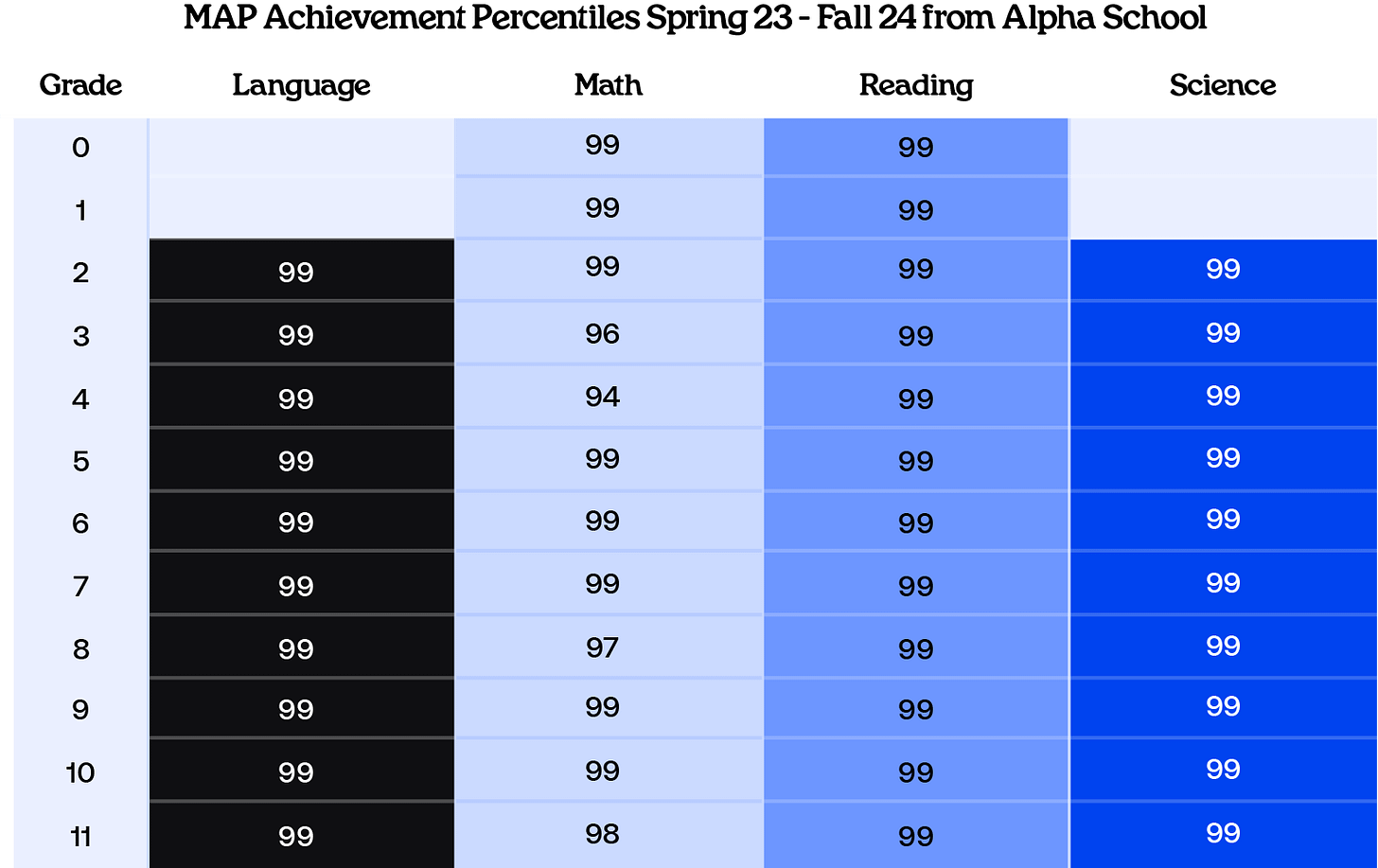

A confession: I’m a data freak, and I take growth measures seriously. So when Price flashed graphics showing Alpha students in the 99th percentile of growth every year—in nearly every subject and every grade—I gasped. That’s an extraordinary claim.

But anyone who’s lived inside a classroom knows how slippery adaptive data can be. A low-investment fall test can make spring growth look miraculous. An unfocused testing environment can tank a score. And MAP, for all its usefulness, has two structural weaknesses: it contains no writing, and it’s far simpler and shorter than state tests.

Add up multiple retakes within the testing window, cash incentives for kids who hit targets, and the predictable “low effort in fall = big gains in spring” dynamic (which students learn quickly—especially when money’s involved), and the 99th-percentile gains start to look a little less magical.

“We pay every kid at Alpha $1,000 if they get to the top 1%.”

- Joe Liemandt, billionaire Alpha Schools investor

Tellingly, Alpha’s parent company declined to provide its MAP data to WIRED for analysis. You can imagine that explaining the messiness of multiple retakes and fall-score tanking might be inconvenient to the narrative.
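To make the retake and fall-score dynamics concrete, here is a minimal sketch of the arithmetic. Every number in it is invented for illustration; none of it is Alpha or MAP data. It also assumes that the highest spring attempt is the one that gets reported, which is my assumption and not something the source confirms.

```python
# Illustration only: all scores are made-up values, not real test data.

def measured_growth(fall_score, spring_attempts):
    """Reported growth, assuming (hypothetically) that only the best
    spring attempt counts toward the growth figure."""
    return max(spring_attempts) - fall_score

# Scenario A: full effort in fall, a single spring sitting.
full_effort_fall = 210
single_spring_attempt = [216]
honest = measured_growth(full_effort_fall, single_spring_attempt)

# Scenario B: same hypothetical student, but a low-effort fall sitting
# and three spring attempts scattered around the same spring level.
low_effort_fall = 204
spring_retakes = [213, 216, 220]
inflated = measured_growth(low_effort_fall, spring_retakes)

print(f"growth with full-effort fall, one attempt: {honest} points")
print(f"growth with low-effort fall, best of three: {inflated} points")
```

In this toy case the student's actual spring level is unchanged, yet the reported growth nearly triples. The point is only that a growth score depends on both endpoints and on how many tries each endpoint gets.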

Despite all this, you could write Alpha off as a faddish school for the rich.

But then you’d miss the real story: that same adaptive-learning logic has already seeped into public schools—and it’s quickly accelerating.

The Fallacy of Adaptive Learning

As part of her pitch, Price leans on a narrative that has already caught fire with school leaders:

“What our team of scientists and software developers have built is like a CAT scan of a child’s brain—showing what they know, what they don’t, and how we can fill the gaps to mastery.”

It’s a compelling metaphor, but hardly a new one. As I wrote last month, this view of literacy as a collection of small, “masterable” skills has shaped early and middle-grade instruction for more than a decade—and contributed mightily to the wider reading collapse.

In other words: what Alpha is doing isn’t innovative. It’s a hyped-up mirror of what districts are already implementing.

Take my own district: we were encouraged to dedicate an entire class period to i-Ready MyPath, replacing teacher-led Wednesday reading instruction with adaptive computer lessons.

The rationale was identical to Price’s: “strong research,” “personalized learning,” “massive gains.”

And i-Ready does call itself research-based. But the strongest studies are vendor-funded or produced by organizations tied to the publisher.

A recent review of adaptive-learning systems noted that many evaluations report gains only on in-system or course tests, with much more mixed evidence on external exams such as state or national assessments.

Even in vendor-funded research, the story is underwhelming. A Johns Hopkins study commissioned by Curriculum Associates found that school-wide i-Ready use did not significantly raise state ELA scores; at best, heavy users saw a tiny gain of a few scale-score points after hundreds of minutes online.

TL;DR: heavy doses of i-Ready practice make you better at the i-Ready test.

What it does beyond that remains far murkier.

Cheap, Scalable, and Wrong

So why doesn’t any of this slow districts down?

Because algorithmic learning is cheap and easy to implement. A district choosing between hiring reading interventionists or buying a district-wide adaptive program knows exactly which option fits inside a crowded budget.

Add growing parent demand for “AI-enhanced learning,” and the sales pitch practically writes itself.

Meanwhile, carving out minutes in the schedule adds up fast. Even 10 minutes a day comes to 30 hours over a 180-day school year, roughly six full weeks of hour-long ELA classes, and none of it is spent on the irreplaceable work of knowledge-building, writing, and reading whole-class texts.
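The arithmetic behind that figure is easy to check. The sketch below assumes a 180-day school year and one 60-minute ELA period per day; both are my assumptions about a typical schedule, so swap in your own school's numbers.

```python
SCHOOL_DAYS_PER_YEAR = 180   # assumption: typical school-year length
ELA_PERIOD_MINUTES = 60      # assumption: one hour-long ELA class per day
SCHOOL_DAYS_PER_WEEK = 5

def lost_time(minutes_per_day):
    """Return (hours per year, weeks of ELA class) taken up by a daily block."""
    total_minutes = minutes_per_day * SCHOOL_DAYS_PER_YEAR
    hours = total_minutes / 60
    ela_periods = total_minutes / ELA_PERIOD_MINUTES
    weeks = ela_periods / SCHOOL_DAYS_PER_WEEK
    return hours, weeks

hours, weeks = lost_time(10)
print(f"10 min/day = {hours:.0f} hours, or {weeks:.0f} weeks of ELA class")
```

Under the same assumptions, a 20-minute daily block doubles the cost to 60 hours, or twelve weeks of class.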

Beating Back the Tide

So how do we push back against the adaptive-learning creep, especially when the stakes for real curriculum implementation are so high?

Three starting points:

1️⃣ Be loudly skeptical of mandatory adaptive blocks—especially as “intervention.”

If your school wants to carve out explicit minutes for algorithmic skill practice, ask why. Ask what evidence supports it. And most importantly, ask what students will no longer be doing during that time.

2️⃣ Come armed with research—and persuade the adults closest to you.

The narrative that these platforms are “research-proven” is sticky but inaccurate.[3] Admin teams often have flexibility in how to interpret district mandates. Re-establish reading (and intervention) as knowledge-building, whole-book reading, writing, fluency, and structured talk, not isolated micro-skills.

3️⃣ Show what responsible AI use actually looks like.

AI isn’t going away, and parents expect it to show up in classrooms. Use it where it actually helps: quick writing feedback cycles, stronger exemplars, sharper questions.

Use AI to elevate thinking—not to outsource it.

Where the real “ROI” lives

Research is remarkably consistent: a highly effective teacher (top quartile) can move students 10–20 percentile points on standardized assessments in a single year.

Most software—even when used “as intended”—shows 0–3 percentile points of impact on those same assessments.

In other words: a great teacher may cost more, but delivers something like 10x the learning gains. When you look at impact per dollar, teachers aren’t expensive—they’re a bargain.
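Here is a rough sketch of where "something like 10x" comes from, using only the two ranges quoted above. Comparing midpoints is my choice of summary, not a method taken from the research, and no cost figures are included because the text gives none.

```python
# Percentile-point ranges quoted in the text above.
teacher_range = (10, 20)
software_range = (0, 3)

def midpoint(value_range):
    """Midpoint of a (low, high) range."""
    low, high = value_range
    return (low + high) / 2

# Midpoint-to-midpoint comparison.
midpoint_ratio = midpoint(teacher_range) / midpoint(software_range)

# Comparison most favorable to software: weakest teacher gain
# against the strongest software gain.
conservative_ratio = teacher_range[0] / software_range[1]

print(f"midpoint ratio:     {midpoint_ratio:.1f}x")
print(f"conservative ratio: {conservative_ratio:.1f}x")
```

Even the comparison most favorable to software leaves the teacher ahead by more than three to one.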

So if we truly want students learning “twice as fast,” we don’t need to replace teachers with algorithms.

Real acceleration comes from people, not programs.

And the future of learning isn’t artificial.

It’s human.

[1] Worth noting: IXL says that it “terminated” its contract with Alpha, but in recent Instagram comments Price continues to describe it as “one of 20 AI programs” in use at Alpha.

[2] Author’s note: I IMPLORE you to browse IXL’s 12th-grade ELA skills if you want a taste of what counts as “rigor” in this world. You don’t need to be a literacy expert to sense the limits, or to feel a little embarrassed for people paying private school tuition for their kids to do, well… this.

How could this approach be any more misinformed about the cognitive science of deep learning? I won’t even get started about the social and moral development issues here…

Luke - I enjoyed this post. I wrote about Alpha a month or so back. They've just opened a new school in Manhattan and I am interested to see how it does. NYC families will be quick to pull their kids if it doesn't seem to be delivering. But this was very useful and I had similar questions about its AI promises, especially when it comes to writing and reading.

https://fitzyhistory.substack.com/p/this-school-doesnt-use-grades-teachers